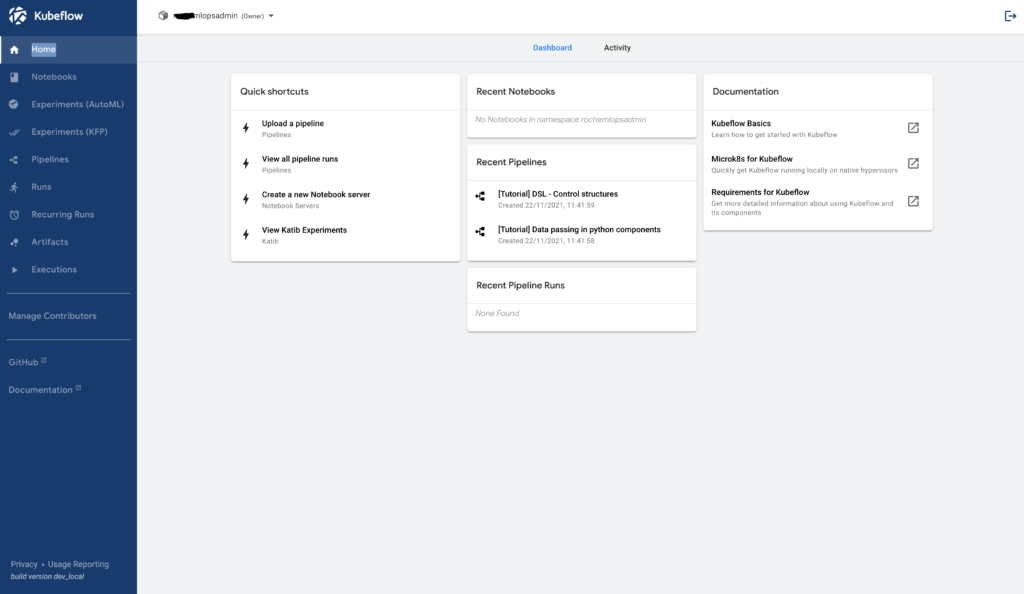

Kubeflow is an open source project dedicated to making deployments of Machine Learning workflows (MLOps) on Kubernetes simple, portable and scalable.

https://www.kubeflow.org/#overview

Kubeflow is not a PaaS offering, so you need to do the setup yourself.

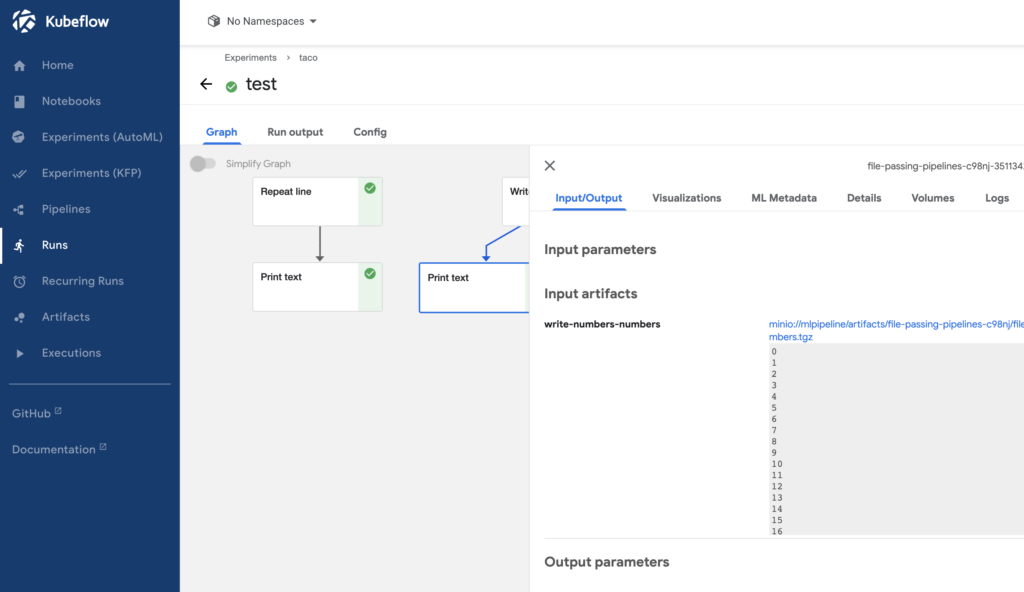

Kubeflow does have a fun, end-to-end Machine Learning example for Azure. It trains a model in Kubeflow to identify tacos and publishes that model to an Azure Machine Learning Service workspace.

https://www.kubeflow.org/docs/distributions/azure/azureendtoend/

kfctl

The installation option documented on the Kubeflow site is to pull down the Kubeflow code from GitHub and use the kfctl tool to build and apply Kubeflow to your AKS cluster. This approach doesn't work out of the box. During installation some services fail with errors, and you have to either find a fix documented by the community or change the Python and Go code yourself.

ArgoCD

The typical production path for automating the installation and maintenance of Kubeflow is ArgoCD. As with the kfctl approach, you need to apply fixes to services and tailor the deployment to the Azure ecosystem. However, ArgoCD gives you full control over every aspect of Kubeflow, especially security hardening of containers in response to zero-day events. That control is why it is the typical production path.

Juju

A third option is a charmed install using Juju, provided by Canonical. Juju describes this as “the simplest way to install Kubeflow”, and it certainly is: within an hour of running through the install guide I was presented with the Kubeflow interface.

https://ubuntu.com/tutorials/install-kubeflow-on-azure-kubernetes-service-aks#1-overview

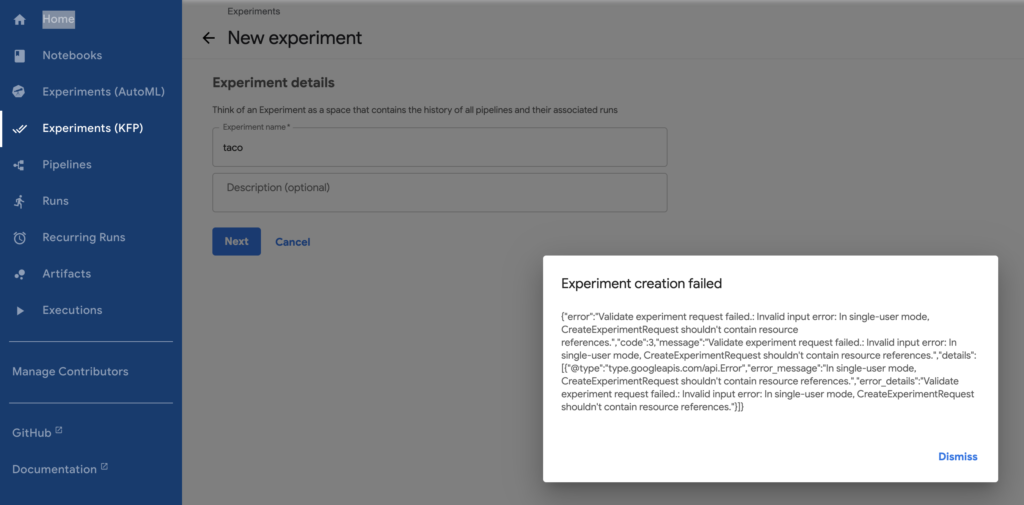

Juju is a “low Ops” approach, but it doesn’t work out of the box either. You need to understand the relationships between the microservices. For example, the User Interface shown above has a major known bug that prevents experiments from being run.

https://github.com/kubeflow/kubeflow/issues/6016

A proper fix means waiting until the community has resolved the issue and Juju provides an updated release.

One workaround I found was to work with Kubeflow locally using the kfp command line.

To make the User Interface work, I ran juju remove-application kubeflow-profiles --force.

I posted this feedback to GitHub, contributing to the open source community for the first time!

To productionise a Juju install, the best approach would be to stand up a dedicated Juju controller and have it manage your AKS cluster. This provides a User Interface for install history and maintenance.